Graph Convolutional Tracking

Junyu Gao

Tianzhu Zhang

Changsheng Xu

|

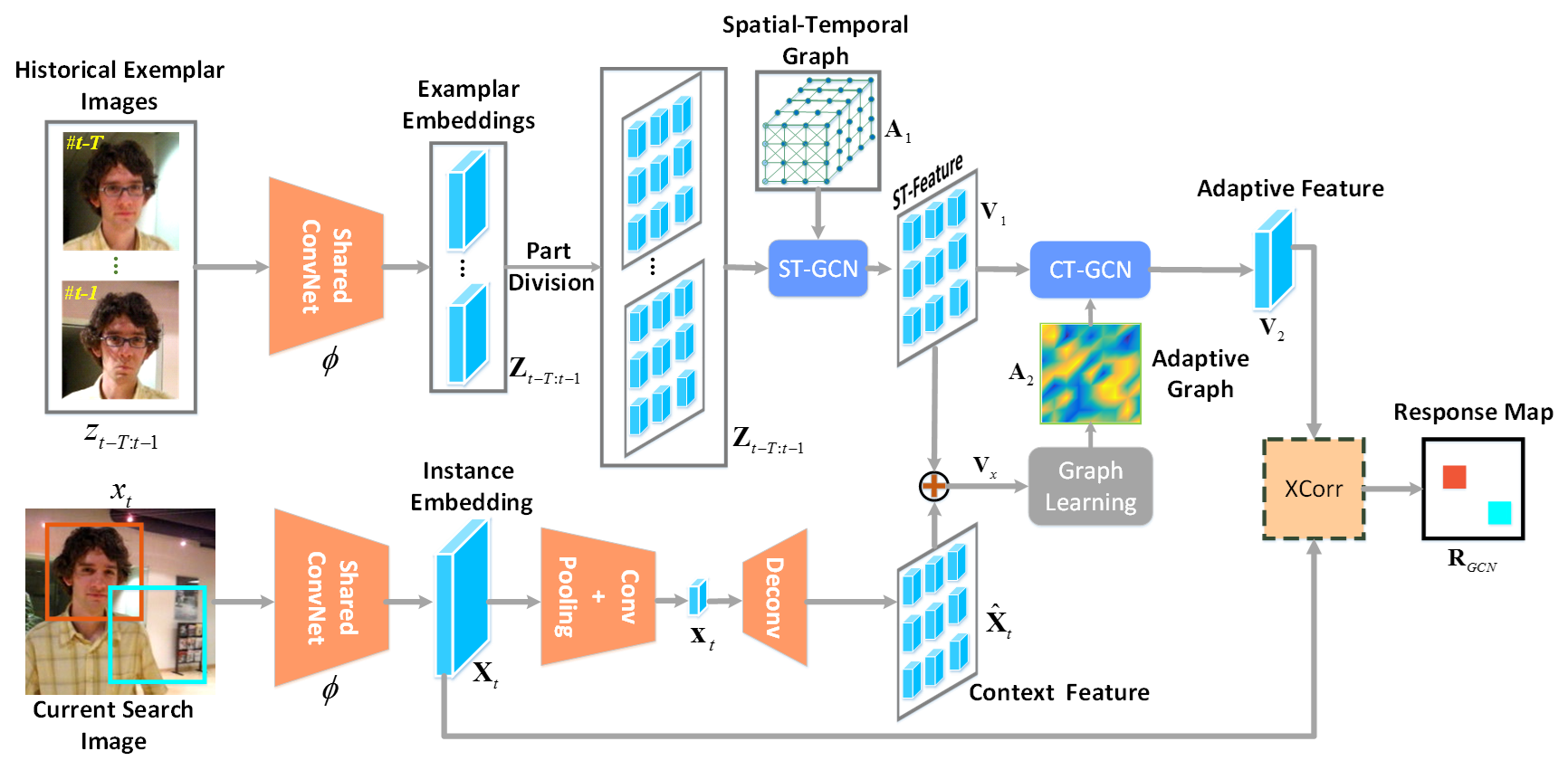

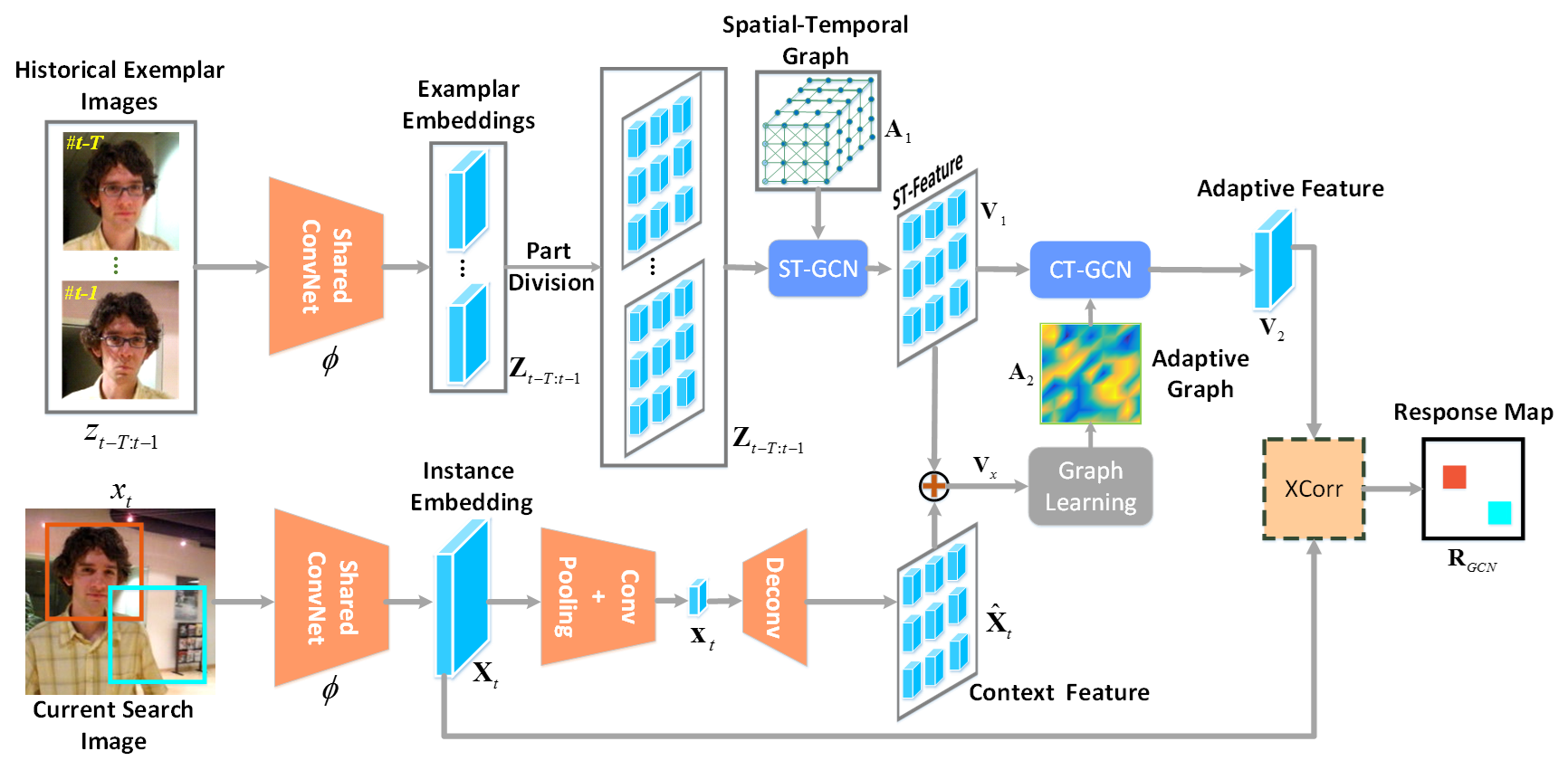

Figure 1. The pipeline of our GCT, which can jointly perform spatial-temporal target appearance modeling

and context-guided feature adaption in a siamese framework.

Specifically, we use a ST-GCN to model the historical exemplars with a spatial-temporal graph.

Then, the generated ST-feature is combined with the current context feature to learn

an adaptive graph, which is used by CT-GCN to produce the adaptive feature.

This feature is evaluated on the search image embedding via a cross-correlation

layer (XCorr) for target localization. |
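The final localization step in the pipeline, cross-correlating a target feature with the search image embedding, can be illustrated with a small sketch. This is not the authors' implementation: it assumes single-channel feature maps stored as plain Python lists, and the names `xcorr` and `peak` are hypothetical helpers introduced only for illustration. A real tracker would operate on multi-channel deep features.

```python
def xcorr(search, kernel):
    """Valid-mode 2D cross-correlation of a kernel over a search map."""
    sh, sw = len(search), len(search[0])
    kh, kw = len(kernel), len(kernel[0])
    response = []
    for i in range(sh - kh + 1):
        row = []
        for j in range(sw - kw + 1):
            # Sum of element-wise products between the kernel and the window at (i, j).
            row.append(sum(search[i + a][j + b] * kernel[a][b]
                           for a in range(kh) for b in range(kw)))
        response.append(row)
    return response


def peak(response):
    """Return the (row, col) position of the maximum response."""
    positions = ((i, j) for i, r in enumerate(response) for j in range(len(r)))
    return max(positions, key=lambda ij: response[ij[0]][ij[1]])


# Toy example: the target pattern sits at row 2, column 2 of the search map.
search = [
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
    [0, 0, 1, 2, 0],
    [0, 0, 3, 4, 0],
    [0, 0, 0, 0, 0],
]
kernel = [[1, 2], [3, 4]]
response = xcorr(search, kernel)
print(peak(response))  # (2, 2)
```

The peak of the response map marks the window that best matches the target feature, which is the position a siamese tracker would report.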

Abstract

Tracking by siamese networks has achieved favorable performance in recent years. However, most existing siamese methods do not take full advantage of spatial-temporal target appearance modeling under different contextual situations. In fact, spatial-temporal information can provide diverse features to enhance the target representation, and context information is important for online adaptation of target localization. To comprehensively leverage the spatial-temporal structure of historical target exemplars and benefit from the context information, we present a novel Graph Convolutional Tracking (GCT) method for high-performance visual tracking. Specifically, the GCT jointly incorporates two types of Graph Convolutional Networks (GCNs) into a siamese framework for target appearance modeling. We adopt a spatial-temporal GCN to model the structured representation of historical target exemplars. Furthermore, a context GCN is designed to utilize the context of the current frame to learn adaptive features for target localization. Extensive results on four challenging benchmarks show that our GCT method performs favorably against state-of-the-art trackers while running at around 50 frames per second.
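For readers unfamiliar with GCNs, the sketch below shows one generic graph convolution layer. It uses the widely known propagation rule $H' = \mathrm{ReLU}(\hat{D}^{-1/2}(A + I)\hat{D}^{-1/2} H W)$, which is an assumption here: this page does not specify the exact formulation of the spatial-temporal or context GCN, and the function names are hypothetical.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def normalize_adjacency(adj):
    """Add self-loops, then apply symmetric degree normalization."""
    n = len(adj)
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_hat]
    return [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]


def gcn_layer(adj, feats, weight):
    """One generic graph convolution: aggregate neighbors, project, apply ReLU."""
    propagated = matmul(matmul(normalize_adjacency(adj), feats), weight)
    return [[max(0.0, v) for v in row] for row in propagated]


# Toy graph: two connected nodes with one feature each and an identity weight.
adj = [[0, 1], [1, 0]]
feats = [[2.0], [4.0]]
weight = [[1.0]]
print(gcn_layer(adj, feats, weight))  # [[3.0], [3.0]]
```

In this toy case each node ends up with the average of its own feature and its neighbor's, which is the smoothing effect that lets a graph of exemplar parts share appearance information.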

Related Publications

Citing

@inproceedings{gao2019gct_cvpr,

Author = {Gao, Junyu and Zhang, Tianzhu and Xu, Changsheng},

Title = {Graph Convolutional Tracking},

booktitle = {CVPR},

Year = {2019}

}

Experimental Results

|

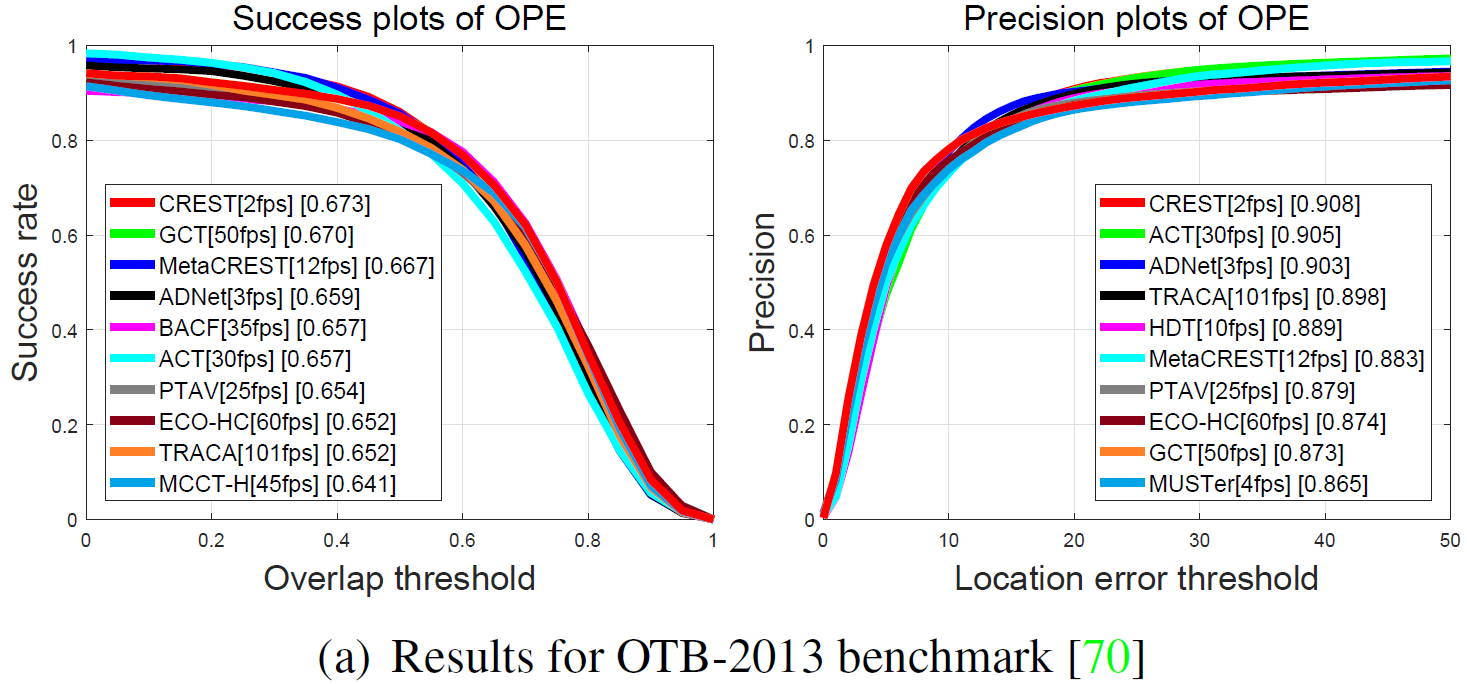

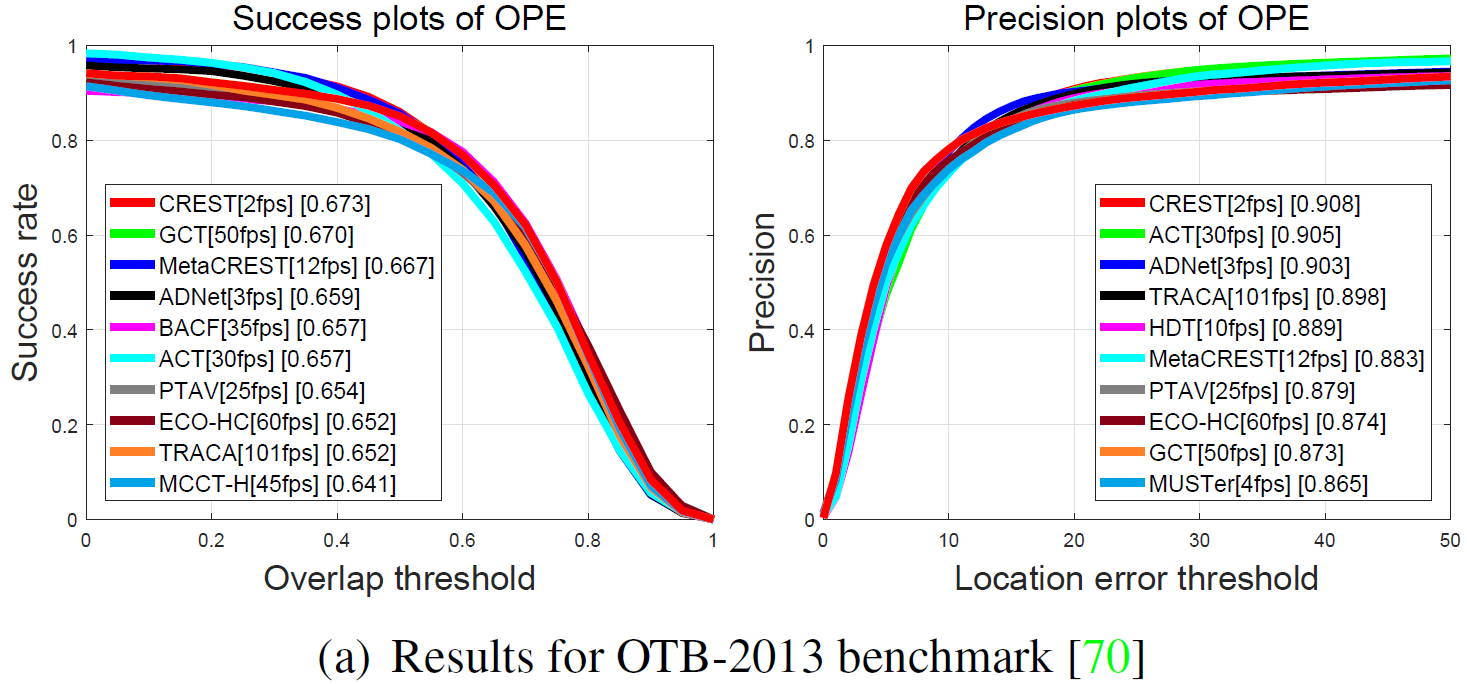

Figure 2. Precision and success plots over all the 50 sequences

using one-pass evaluation on the OTB-2013 Dataset. The legend

contains the area-under-the-curve score and the average distance

precision score at 20 pixels for each tracker. Our GCT method

performs favorably against the state-of-the-art trackers. |
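The two scores reported in the legends can be computed as in the following sketch. It follows the common OTB-style protocol as an assumption (boxes given as `(x, y, w, h)`, 21 evenly spaced overlap thresholds from 0 to 1) and is not the authors' evaluation code; the function names are hypothetical.

```python
import math


def center_error(a, b):
    """Euclidean distance between the centers of two (x, y, w, h) boxes."""
    ax, ay = a[0] + a[2] / 2, a[1] + a[3] / 2
    bx, by = b[0] + b[2] / 2, b[1] + b[3] / 2
    return math.hypot(ax - bx, ay - by)


def iou(a, b):
    """Intersection over union of two (x, y, w, h) boxes."""
    iw = min(a[0] + a[2], b[0] + b[2]) - max(a[0], b[0])
    ih = min(a[1] + a[3], b[1] + b[3]) - max(a[1], b[1])
    inter = max(0.0, iw) * max(0.0, ih)
    union = a[2] * a[3] + b[2] * b[3] - inter
    return inter / union if union > 0 else 0.0


def precision_at(preds, gts, threshold=20.0):
    """Fraction of frames whose center error is within the pixel threshold."""
    errors = [center_error(p, g) for p, g in zip(preds, gts)]
    return sum(e <= threshold for e in errors) / len(errors)


def success_auc(preds, gts, steps=21):
    """Mean success rate over evenly spaced overlap thresholds in [0, 1]."""
    overlaps = [iou(p, g) for p, g in zip(preds, gts)]
    thresholds = [i / (steps - 1) for i in range(steps)]
    rates = [sum(o > t for o in overlaps) / len(overlaps) for t in thresholds]
    return sum(rates) / len(rates)


# Toy sequence: one perfectly tracked frame and one complete miss.
gts = [(0, 0, 10, 10), (0, 0, 10, 10)]
preds = [(0, 0, 10, 10), (100, 100, 10, 10)]
print(precision_at(preds, gts))  # 0.5
print(success_auc(preds, gts))
```

Averaging the success rate over the thresholds approximates the area under the success curve, so a single number summarizes the whole plot.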

|

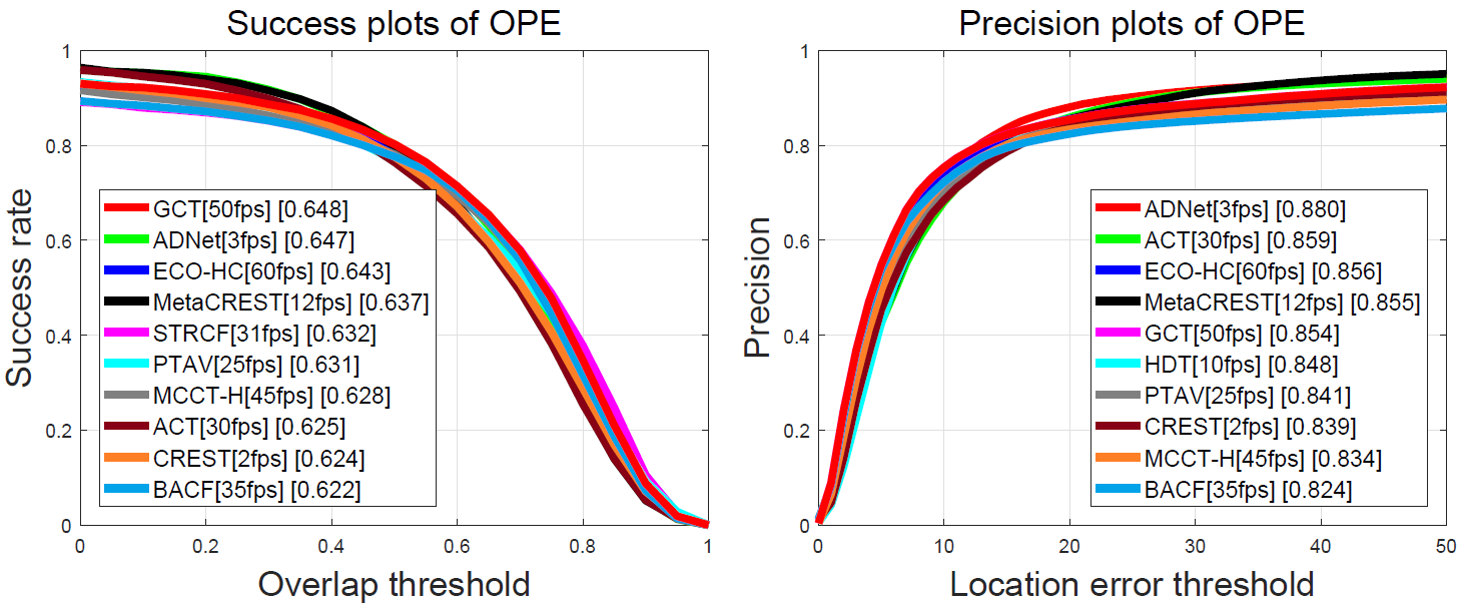

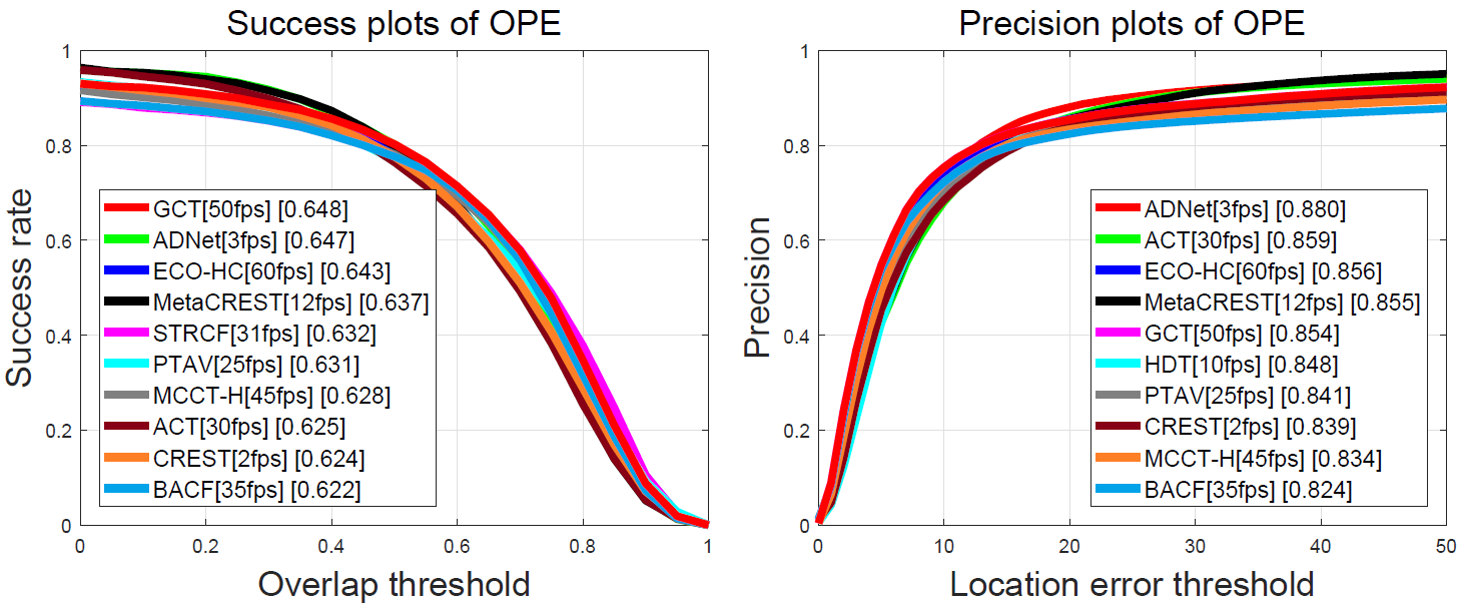

Figure 3. Precision and success plots over all 100 sequences using

one-pass evaluation on the OTB-2015 dataset. The legend contains

the area-under-the-curve score and the average distance precision

score at 20 pixels for each tracker. Our GCT method performs

favorably against the state-of-the-art trackers. |

|

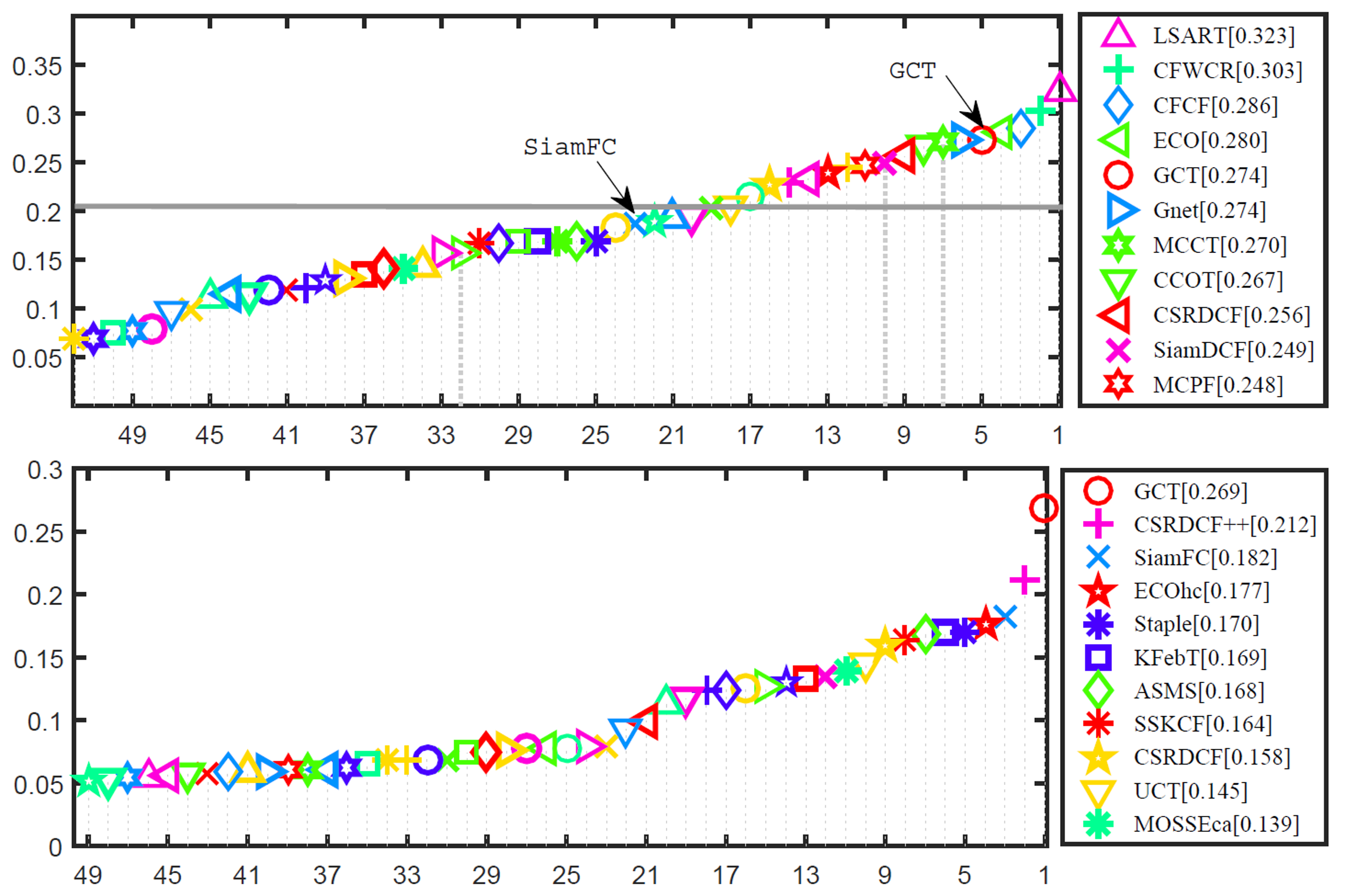

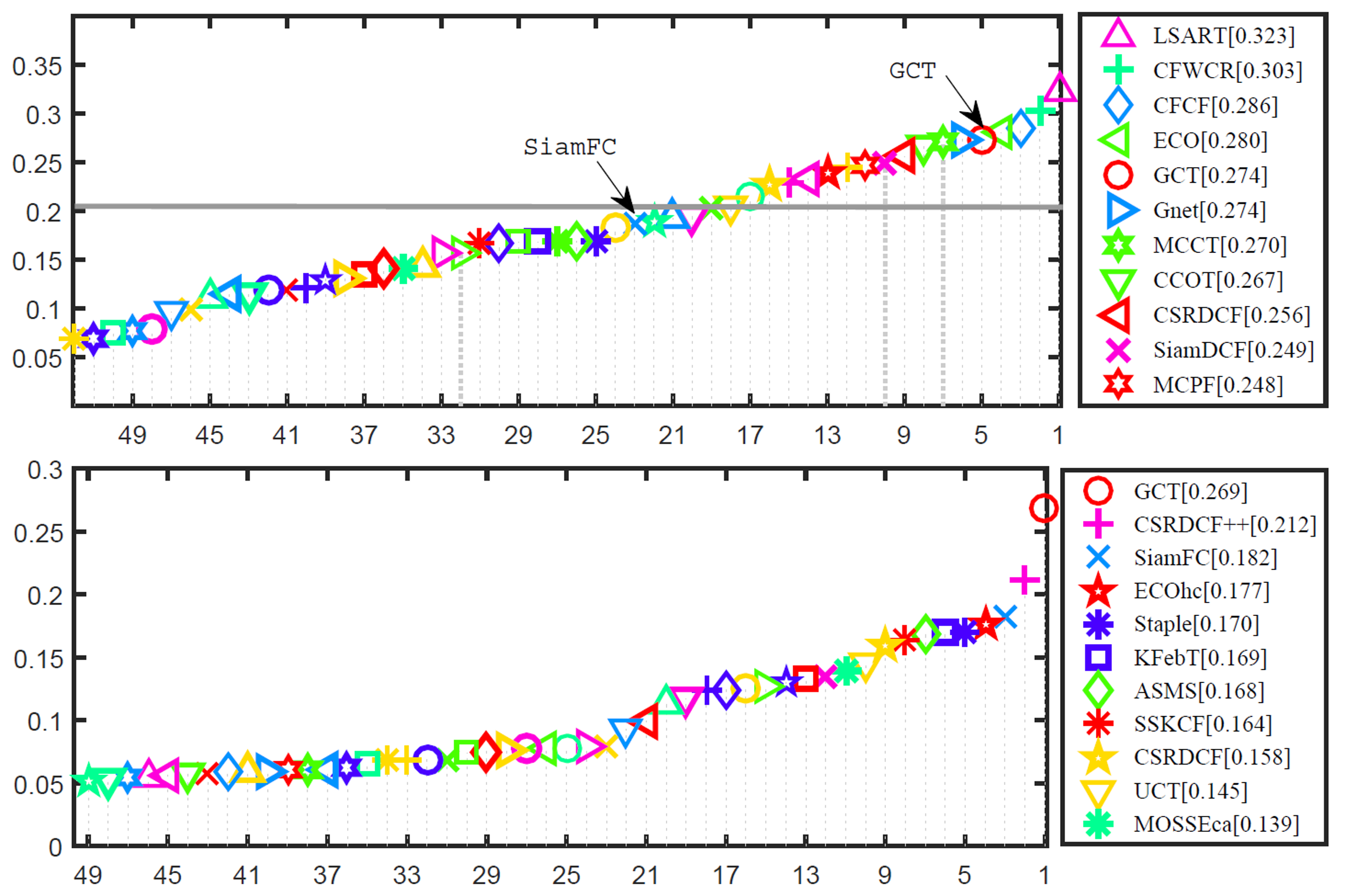

Figure 4. Precision and success plots over the 123 sequences

using one-pass evaluation on the UAV dataset. The legend

contains the area-under-the-curve score and the average distance

precision score at 20 pixels for each tracker. Our GCT method

performs favorably against the state-of-the-art trackers. |

Figure 5. Comparison of EAO scores on the VOT2017 challenge and its real-time experiment. Our GCT method performs favorably against the state-of-the-art trackers.
